
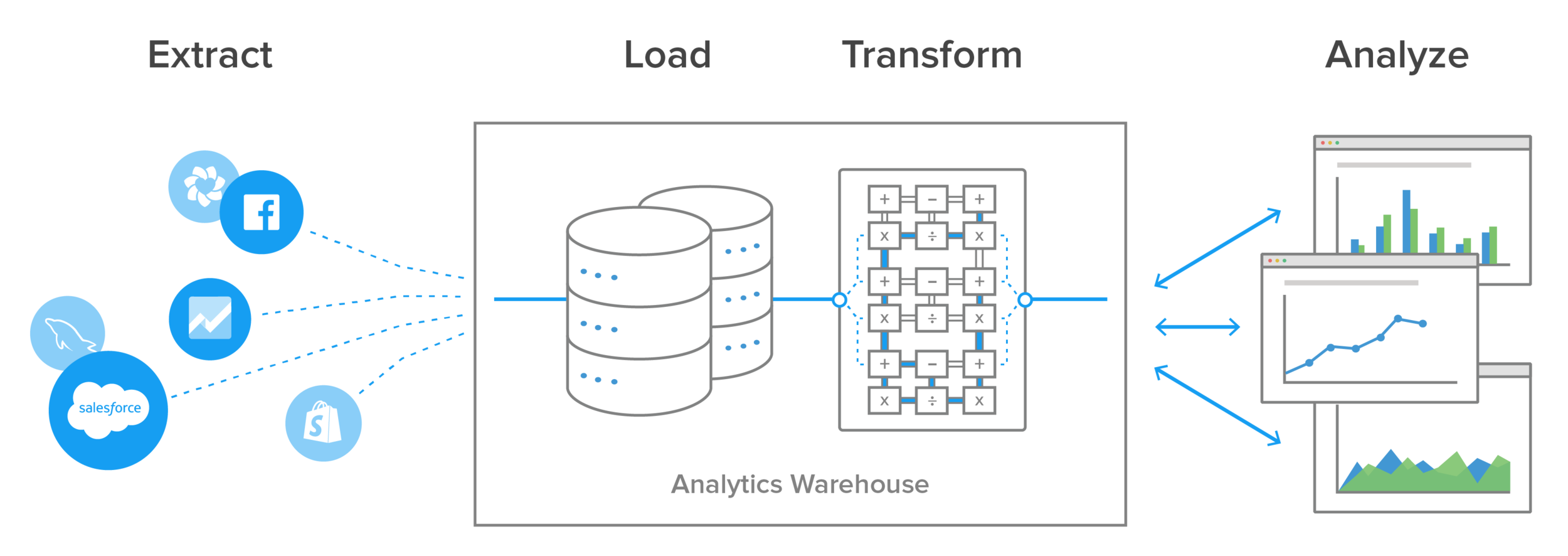

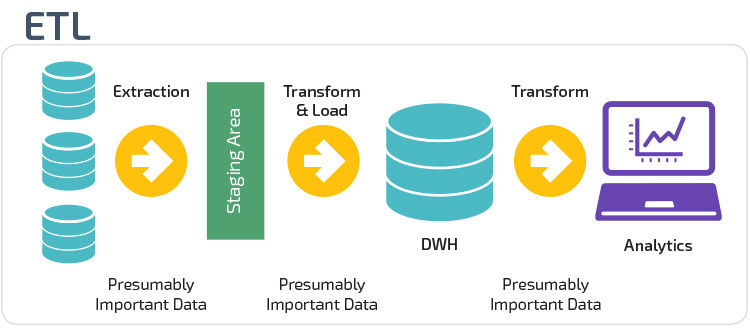

ELT stands for Extract, Load, Transform, and is a process used in modern data pipelines for integrating and transforming data from various sources into a centralized data store. The ELT process is similar to the more traditional ETL (Extract, Transform, Load) process, but with a key difference: data is extracted from source systems and loaded directly into a data store, where it can then be transformed.

Dagster provides many integrations with common ETL/ELT tools.

Here's an example of an ELT process in Python using the Pandas library and SQLite3. Note that Pandas must be installed in your Python environment to run the code; sqlite3 ships with the Python standard library.

Given two input files, customers.csv and orders.csv, as follows:

```
customer_id,name,total_orders
```

Extract: We start by extracting data from our source systems. For this example, let's assume we have a CSV file containing customer orders.

```python
import sqlite3

import pandas as pd

# Extract data from the CSV files
orders_df = pd.read_csv('orders.csv')
customers_df = pd.read_csv('customers.csv')
```

Load: Next, we load the data into our data store. In this case, we'll use a SQLite database.

```python
# Connect to (or create) the SQLite database file
conn = sqlite3.connect('my_database.db')

# Load data into database, replacing any existing tables
orders_df.to_sql('orders', conn, if_exists='replace', index=False)
customers_df.to_sql('customers', conn, if_exists='replace', index=False)
```

Transform: Finally, we transform the data as needed. For example, we might want to join the orders data with customer data to get more insights. The join below assumes both tables have a customer_id column.

```python
# Extract customer data from database
customers_df = pd.read_sql_query('SELECT * FROM customers', conn)

# Join orders and customers data
enriched_df = pd.merge(orders_df, customers_df, on='customer_id')
print(enriched_df)
```

In this example, we used Pandas to extract data from a CSV file, load it into a SQLite database, and then join it with customer data to enrich the dataset. This ELT process can be repeated for other sources of data, allowing us to integrate and transform multiple datasets into a centralized data store.

ETL, or "Extract, Transform, Load", is the process of first extracting data from a data source, transforming it, and then loading it into a target data warehouse. In ETL workflows, much of the meaningful data transformation occurs outside this primary pipeline in a downstream business intelligence (BI) platform.

ETL is contrasted with the newer ELT (Extract, Load, Transform) workflow, where transformation occurs after data has been loaded into the target data warehouse. In many ways, the ETL workflow could have been renamed the ETLT workflow, because a considerable portion of meaningful data transformations happen outside the data pipeline. The same transformations can occur in both ETL and ELT workflows; the primary difference is when the data is transformed (inside or outside the primary ETL workflow) and where (ETL platform, BI tool, or data warehouse).

It's important to talk about ETL and understand how it works, where it provides value, and how it can hold people back. If you don't talk about the benefits and drawbacks of systems, how can you expect to improve them?

How ETL works

In an ETL process, data is first extracted from a source, transformed, and then loaded into a target data platform. We'll go into greater depth for all three steps below.

In this first step, data is extracted from different data sources. Data extracted at this stage is likely to be used eventually by business users to make decisions.
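For comparison with the ELT example, the ETL ordering (extract, transform, then load) can be sketched as follows. This is a minimal illustration under stated assumptions, not the original author's code: it uses only Python's standard csv and sqlite3 modules instead of Pandas, the inline sample rows and their column names are hypothetical stand-ins for orders.csv and customers.csv, and the enriched_orders table name is invented for the example.

```python
import csv
import io
import sqlite3

# Hypothetical sample data standing in for orders.csv and customers.csv;
# the column names and rows are assumptions, not the original files.
ORDERS_CSV = """order_id,customer_id,amount
1,101,25.00
2,102,40.50
3,101,10.00
"""
CUSTOMERS_CSV = """customer_id,name
101,Ada
102,Grace
"""


def extract(text):
    """Extract: parse CSV text into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(orders, customers):
    """Transform: join orders to customers in Python, before any load."""
    names = {c["customer_id"]: c["name"] for c in customers}
    return [
        (int(o["order_id"]), int(o["customer_id"]),
         names[o["customer_id"]], float(o["amount"]))
        for o in orders
        if o["customer_id"] in names  # inner join: drop unmatched orders
    ]


def load(conn, rows):
    """Load: write only the already-transformed rows to the target."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS enriched_orders "
        "(order_id INTEGER, customer_id INTEGER, name TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO enriched_orders VALUES (?, ?, ?, ?)", rows)
    conn.commit()


def run_etl(conn):
    """Run the three steps in ETL order and return the loaded rows."""
    rows = transform(extract(ORDERS_CSV), extract(CUSTOMERS_CSV))
    load(conn, rows)
    return rows


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")  # in-memory target for the sketch
    run_etl(conn)
    for row in conn.execute("SELECT * FROM enriched_orders ORDER BY order_id"):
        print(row)
```

The point of the sketch is the ordering: the join happens in Python before anything reaches the database, so the target only ever holds the transformed table. In the ELT version, the raw tables are loaded first and joined afterwards.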
